HackerRank Interview AI: What the Interviewer Sees (and How to Ace It)

TL;DR: HackerRank's AI-Assisted Interview gives you a full-featured coding AI (chat, inline completions, agent mode), but your interviewer watches every prompt you send and every response you accept. The candidates who pass are not the ones who use it most; they are the ones who use it in ways that show real thinking. This guide walks through what that looks like.

In a standard HackerRank assessment from three years ago, you were on your own: problem, compiler, timer. Today, if a company enables the AI-Assisted Interview format, you're handed something closer to a Cursor or GitHub Copilot setup — AI chat, inline completions, multi-turn agent mode — all running inside HackerRank's IDE. Sounds like an edge. It is. But there's a catch most candidates don't find out until it's too late: the interviewer sees the whole transcript in real time.

That changes the game completely. Here's how to play it right.

HackerRank's Built-In AI Is Not a Cheat Code

When HackerRank enables the AI-Assisted IDE, you get three capabilities:

Chat interface — Ask questions about the problem, your code, or specific files. You tag the problem statement to give the AI context.

Inline code completions — Suggestions appear as you type, similar to GitHub Copilot. You can accept or ignore them.

Agent mode — Multi-turn conversations where the AI can write code, edit files, and perform actions from prompts. Useful for complex, multi-step tasks.

The supported models include Claude-sonnet-4.6, Gemini Flash/Pro, and GPT-5, so the assistant itself is genuinely capable.

But here's what HackerRank explicitly tells interviewers: the platform generates "comprehensive reports for each interview" showing exactly how you interacted with the AI. Code duplication rates (a metric that spikes 4x when candidates blindly paste AI output) are highlighted. Interviewers are trained to watch for candidates who "rely too heavily" on the assistant.

Using the AI isn't the test. How you use it is.

What the Interviewer Actually Sees

This is the part that surprises most candidates.

The interviewer's panel shows your entire chat history as it happens — every prompt you wrote, every AI response, every suggestion you accepted or rejected. It's not logged after the fact; it's a live feed.

HackerRank also tracks:

- Tab switching (the classic proctoring flag)

- Copy-paste events (flagged by default)

- AI interaction density: how many prompts you send per hour, and what percentage of your code appears to be AI-generated

This means that asking the AI to "write a function that sorts a list of objects by timestamp" and pasting the result verbatim is a red flag even if the code works. You've demonstrated that you can copy and paste, not that you can solve problems.

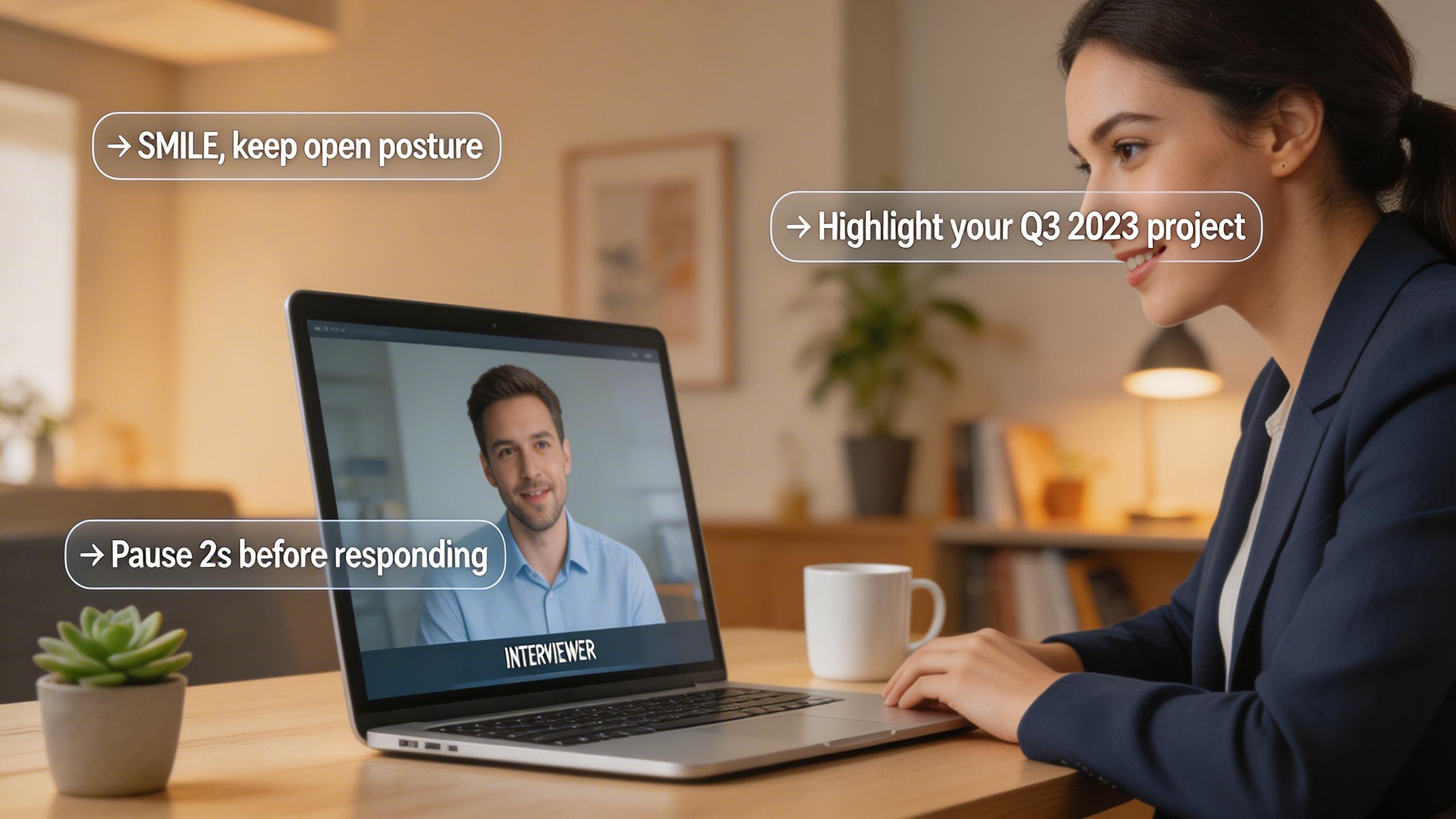

What interviewers want to see is different: someone who thinks out loud through the problem, uses AI to check edge cases or generate test scenarios, and can explain any line of their solution if asked.
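As a concrete illustration of that difference, here is a minimal sketch of the timestamp-sorting task, together with the edge cases a candidate might check before accepting generated code. The `Event` type, its field names, and the decision to place undated events last are assumptions made for this example; they do not come from any actual HackerRank problem.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Event:
    # Hypothetical record type, used only for this illustration.
    name: str
    timestamp: Optional[datetime]


def sort_by_timestamp(events: list[Event]) -> list[Event]:
    """Return events ordered oldest first; events with no timestamp go last.

    sorted() is stable, so events with equal timestamps keep their input order.
    """
    # The key is a tuple: False sorts before True, so dated events come first.
    # datetime.min is a placeholder that is only compared among undated events.
    return sorted(
        events,
        key=lambda e: (e.timestamp is None, e.timestamp or datetime.min),
    )


# Edge cases worth checking: ties, a missing timestamp, and an empty list.
t1 = datetime(2024, 1, 1, 9, 0)
t2 = datetime(2024, 1, 1, 10, 0)
events = [Event("late", t2), Event("undated", None), Event("tie_a", t1), Event("tie_b", t1)]

ordered = [e.name for e in sort_by_timestamp(events)]
print(ordered)                # ['tie_a', 'tie_b', 'late', 'undated']
print(sort_by_timestamp([]))  # []
```

Being able to say why the ties keep their order, and what happens to a record with no timestamp, is the kind of line-by-line explanation an interviewer may ask for.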

The Four Moves That Signal Strong AI Collaboration

Candidates who pass AI-Assisted interviews tend to do four things that weaker candidates don't.

1. Verbalize before prompting. Before sending a message to the AI, state your current thinking in comments or out loud. "I'm thinking this needs a hash map for O(1) lookup. Let me ask the AI if there's a simpler approach." This establishes that the insight is yours; the AI is just a sounding board.

2. Ask about approaches, not answers. "What are the time complexity trade-offs between BFS and DFS for this problem type?" is a strong prompt. "Write the solution to this problem" is a signal that you're stuck and hoping AI bails you out.

3. Validate and reject suggestions actively. When AI proposes code, read it, run it against edge cases in your head, and push back if it's wrong. Interviewers are impressed when they see you catch an AI error — it shows you understand the problem better than the tool does.

4. Keep code ownership visible. Write the scaffolding yourself. Use AI for specific pieces (an unfamiliar API, a tricky regex, a test case generator), then integrate them manually. The difference between "AI wrote everything" and "human wrote the structure, AI filled in a specific piece" is obvious in the transcript.
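The first and third moves can be sketched together. The example below assumes a made-up task (find two different positions whose values sum to a target) and a made-up AI suggestion containing a plausible bug; neither comes from a real interview. It shows a plan written as a comment before prompting, then a suggestion being checked against an edge case and corrected.

```python
# My plan (written before prompting): one pass with a dict gives O(1) lookups,
# so the whole scan is O(n) time and O(n) extra space.


def find_pair_suggested(nums, target):
    # Hypothetical AI-suggested version, kept here only to show the flaw.
    # It builds the full index first, so a number can be paired with itself.
    index = {value: i for i, value in enumerate(nums)}
    for i, value in enumerate(nums):
        if target - value in index:
            return (i, index[target - value])
    return None


def find_pair(nums, target):
    # Corrected version: look up the complement before storing the current
    # value, so each index can only pair with an earlier, different index.
    seen = {}
    for i, value in enumerate(nums):
        complement = target - value
        if complement in seen:
            return (seen[complement], i)
        seen[value] = i
    return None


print(find_pair_suggested([3, 5, 1], 6))  # (0, 0): reuses index 0, which is wrong
print(find_pair([3, 5, 1], 6))            # (1, 2): 5 + 1
print(find_pair([3, 4], 6))               # None
```

The input `[3, 5, 1]` with target 6 is the kind of case worth running in your head: half the target appears exactly once, which is where the self-pairing bug shows up.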

Company-Specific HackerRank Patterns

Different companies structure their HackerRank assessments differently. Knowing the pattern in advance saves time you'd otherwise spend orienting.

Amazon assessments typically include a multi-file codebase (feature implementation or debugging) plus a work simulation section. The AI-Assisted format rewards candidates who quickly understand the existing code structure before making changes.

Google tends toward algorithmic problems even in the AI-enabled format. The expectation is that you can explain time/space complexity regardless of how the code was produced.
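One way to practice that expectation is to annotate complexity directly in the code, whoever wrote it. The sketch below is an illustrative breadth-first search over a small made-up graph, not a problem taken from any company's assessment; the function name and the adjacency-dict representation are assumptions.

```python
from collections import deque


def shortest_path_length(graph, start, goal):
    """Breadth-first search on an unweighted, directed adjacency dict.

    Time:  O(V + E), each vertex is enqueued at most once and each edge
           is scanned at most once.
    Space: O(V) for the visited set and the queue.
    Returns the number of edges on a shortest path, or None if unreachable.
    """
    if start == goal:
        return 0
    visited = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor == goal:
                return dist + 1
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, dist + 1))
    return None


graph = {"a": ["b", "c"], "b": ["d"], "c": ["d"], "d": ["e"], "e": []}
print(shortest_path_length(graph, "a", "e"))  # 3
print(shortest_path_length(graph, "e", "a"))  # None
```

A depth-first search has the same O(V + E) bound, but it does not guarantee that the first path it finds is the shortest, which is the trade-off worth being able to state out loud.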

Microsoft commonly uses bug-fixing and feature-addition tasks in realistic codebases. The AI assistant is particularly useful here for navigating unfamiliar files — but you need to understand what you changed and why.

Goldman Sachs and other financial firms often combine strict time limits with moderate problem difficulty. Speed matters, and using AI for syntax and boilerplate (not logic) is the legitimate lever.

How to Use AceRound AI to Prepare for a HackerRank Interview

The HackerRank AI is available during the test. AceRound AI is for before it.

The preparation gap most candidates miss is that the skills measured in an AI-Assisted interview are different from raw DSA knowledge. You're being evaluated on:

- How clearly you can frame a problem

- Whether you can spot and correct AI errors

- How well you communicate technical reasoning in real time

These are skills you can train. Using an AI interview coach during mock sessions — where it surfaces follow-up questions, checks whether your explanations are coherent, and flags when you've gone quiet too long — builds exactly this muscle.

Specifically for HackerRank prep:

- Practice explaining your approach before coding, even when no one is watching

- Do 20+ HackerRank practice problems: candidates who reach that number reportedly pass at a roughly 50% higher rate

- Simulate the AI-Assisted environment: use Copilot or Cursor while solving problems, and deliberately practice catching its mistakes

- For company-specific prep, check Glassdoor for recent HackerRank experiences at your target company
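For the "catching its mistakes" part of that practice, one possible drill is to compare generated code against a slow but easy-to-trust version on random inputs. The sketch below assumes a maximum-subarray task chosen purely for illustration; the function names and the `find_counterexample` helper are hypothetical and not part of any tool mentioned here.

```python
import random


def max_subarray_brute(nums):
    # Slow but easy to trust: try every non-empty contiguous slice.
    return max(
        sum(nums[i:j])
        for i in range(len(nums))
        for j in range(i + 1, len(nums) + 1)
    )


def max_subarray_fast(nums):
    # Stand-in for code under review (Kadane's algorithm, O(n)).
    best = current = nums[0]
    for value in nums[1:]:
        current = max(value, current + value)
        best = max(best, current)
    return best


def max_subarray_buggy(nums):
    # Typical mistake: starting from 0 breaks on all-negative input.
    best = current = 0
    for value in nums:
        current = max(0, current + value)
        best = max(best, current)
    return best


def find_counterexample(candidate, oracle, trials=500, seed=0):
    """Return the first random input where candidate and oracle disagree, else None."""
    rng = random.Random(seed)
    for _ in range(trials):
        nums = [rng.randint(-10, 10) for _ in range(rng.randint(1, 8))]
        if candidate(nums) != oracle(nums):
            return nums
    return None


print(find_counterexample(max_subarray_fast, max_subarray_brute))   # None
print(find_counterexample(max_subarray_buggy, max_subarray_brute))  # an all-negative list
```

Small inputs and a fixed seed keep any failure short and reproducible, which makes it easier to explain what went wrong.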

FAQ

Can I use AI in a HackerRank interview?

Only if the company has enabled the AI-Assisted Interview format. Not all assessments include it. If it's enabled, you'll see the AI panel in the IDE. If it's not enabled, using external AI tools is a terms-of-service violation and will likely be flagged by proctoring.

Does HackerRank detect if I use AI?

In the AI-Assisted format, no detection is needed: the interviewer sees everything directly. In standard assessments, HackerRank uses plagiarism detection with typing pattern analysis (93% accuracy claimed) and flags paste events. Pasting AI-generated code into a standard (non-AI-enabled) assessment is high risk.

What happens if I use the AI too much in an AI-Assisted interview?

You won't automatically fail. But your AI interaction transcript will show a pattern of dense, solution-seeking prompts and near-verbatim code acceptance. Interviewers who see this typically rate candidates lower on "independent thinking" and "ability to collaborate with AI," which are now formal evaluation criteria on some rubrics.

How do I prepare for a HackerRank AI-Assisted interview in one week?

Focus on three things: (1) Do 15-20 HackerRank medium problems to calibrate your baseline speed. (2) Practice talking through your reasoning out loud while coding. (3) Simulate the AI-Assisted environment by using Copilot/Cursor on practice problems and actively critiquing its output.

Is the HackerRank AI interview hard?

Harder than a standard online assessment (OA) in one specific way: you have to perform your problem-solving process transparently, not just produce a correct answer. Candidates who are strong problem-solvers but poor communicators often underperform. Candidates who work methodically and narrate their thinking tend to do well.

Which AI model does HackerRank use?

The platform currently supports multiple models including Claude-sonnet-4.6, Gemini Flash, Gemini Pro, and GPT-5. Candidates typically cannot choose the model — the interviewer or company selects it during setup.

Author · Alex Chen. Career consultant and former tech recruiter. Spent 5 years on the hiring side before switching to help candidates instead. Writes about real interview dynamics, not textbook advice.
