Consulting Interview AI: What Actually Works for BCG, McKinsey, and Bain

TL;DR: Consulting interview AI tools (mbb.ai, CaseWithAI, PrepLounge's Casebot) are genuinely useful for building case volume. But AI alone won't get you through the final round. The candidates who make it to the offer stage use AI for the first 70% of their prep (volume, framework drill, rapid feedback) and humans for the last 30% (judgment calibration, communication nuance, firm-specific expectations). Here's how to structure that, and which AI tools actually work for BCG, McKinsey, and Bain.

Consulting recruiting is one of the highest-pressure interview processes in the job market. A McKinsey final round involves 3–4 back-to-back case interviews where your structured thinking, communication clarity, and business judgment are all evaluated simultaneously. BCG's process involves Casey — their proprietary AI chatbot — plus live case rounds. Bain uses written cases at some offices.

Every year, candidates show up with 50+ hours of preparation and still blank during a simple profitability framework. The problem isn't effort. It's often that their prep — AI or human — didn't simulate the right kind of pressure.

This guide covers what works in consulting interview AI prep, what doesn't, and how to calibrate the mix.

What BCG Casey and McKinsey's Lilli Tell You About Where AI Is Going in Consulting

BCG officially offers Casey — its own AI chatbot — as a case interview practice tool. This isn't a third-party app; it's BCG's own product, and they want you to use it. BCG's careers page links to it directly. The signal is clear: BCG is not only fine with candidates using AI for prep, they're actively facilitating it.

McKinsey has gone further internally. Their Lilli AI platform (an internal AI assistant) is now used by consultants in their day-to-day work. Interviewers at McKinsey are themselves users of AI tools. As of 2026, there are reports that McKinsey is beginning to evaluate candidates' judgment about AI — how they think about using it, what they'd delegate to it, what they wouldn't. McKinsey's careers page doesn't announce this explicitly, but it's visible in their problem-solving interview questions.

What this means for you: firms are not going to penalize you for using AI to prepare. They're going to start expecting that you have, and that you have opinions about AI's limitations.

Three Zones: Where AI Case Interview Prep Actually Helps

Not all case prep is the same. AI tools perform differently across these zones.

Zone 1: Framework drilling and memorization (AI excels)

Learning how to structure a profitability case, a market entry case, or an M&A case requires repetition. AI can generate infinite variations of the same case type, give instant feedback on your MECE structure, and let you drill the same framework until it's automatic. This is where tools like mbb.ai, PrepLounge's AI Casebot, and CaseWithAI are strong.

Expect to spend 10–20 hours in this zone at the start of your prep.

Zone 2: Communication calibration (AI is limited)

Consulting firms don't just evaluate what you say — they evaluate how you say it. Are you clear? Concise? Do you synthesize before giving detail, or do you dump information? Can you manage the interviewer's attention?

AI tools can flag structural issues (you forgot the "so what"), but they can't accurately gauge whether your communication would land with a McKinsey partner. That requires human feedback from someone who has sat in those rooms — ex-consultants, coaches, or strong prep partners.

Zone 3: Judgment and insight (AI fails here)

The hardest part of a case interview isn't the math or the framework. It's knowing when to push deeper on a hypothesis versus when to move on, when to challenge an assumption the interviewer gave you, and when a "creative" answer is actually what they want versus when it reads as off-structure.

AI tools consistently over-index on correctness and completeness. They'll reward a technically sound answer even if it would fall flat with a human interviewer because it lacks business intuition. Don't let AI prep make you technically rigorous but commercially tone-deaf.

If you're preparing for the behavioral components of your consulting interview — the "fit" portion where they ask about your leadership, teamwork, and impact stories — AceRound AI runs structured mock sessions that give you real-time feedback on your STAR delivery. Consulting fit interviews trip up a lot of otherwise strong candidates.

The 70/30 Method: How to Structure Your Case Prep

Here's a framework that reflects how successful candidates actually use AI tools.

Weeks 1–3 (about 70% of your prep hours): AI-heavy volume phase

- Run 3–5 AI cases per day using mbb.ai, CaseWithAI, or PrepLounge Casebot

- Drill your weak frameworks specifically — if market sizing takes you 8 minutes instead of 4, run 20 market sizing cases

- Use AI to practice math speed and structure under time pressure

- Generate your 5–6 core behavioral stories with AI feedback on structure

Weeks 4–6 (about 30% of your prep hours): Human calibration phase

- Run 2–3 live mock cases per week with real people (ex-consultants via PrepLounge, peer practice groups, or a coach)

- Focus on communication quality, not just answer accuracy

- Identify your specific weaknesses from AI prep and target them in human sessions

- Do a full 2-case mock "marathon" — back-to-back cases to simulate the real interview cadence

The week before:

- Wind down case volume — you should be maintaining, not building

- Firm-specific research: read recent BCG/McKinsey/Bain articles, sharpen your "why this firm" answer

- Behavioral stories polished and flexible — you should be able to adapt a single story to fit 3–4 different questions
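The 70/30 split is easier to plan if you read it as a share of hours rather than calendar weeks. Here is a minimal Python sketch, assuming a hypothetical 60-hour total budget and the two three-week phases described above. The function name and the 60-hour figure are illustrative assumptions, not numbers from any firm or tool.

```python
def split_prep_hours(total_hours, ai_percent=70, ai_weeks=3, human_weeks=3):
    """Split a total prep budget into AI and human hours, plus weekly loads."""
    if not 0 <= ai_percent <= 100:
        raise ValueError("ai_percent must be between 0 and 100")
    ai_hours = total_hours * ai_percent / 100
    human_hours = total_hours - ai_hours
    return {
        "ai_hours": ai_hours,
        "human_hours": human_hours,
        "ai_hours_per_week": ai_hours / ai_weeks,
        "human_hours_per_week": human_hours / human_weeks,
    }


# Assumed budget: 60 hours across the six weeks (adjust to your own situation).
plan = split_prep_hours(60)
for label, hours in plan.items():
    print(f"{label}: {hours:.1f}")
```

With these assumed inputs, the AI phase carries the heavier weekly load even though both phases span three weeks.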

Firm-Specific Nuances AI Tools Often Miss

BCG

BCG uses the Casey chatbot officially, so you should use it. Beyond that, BCG tends to value creative hypotheses and comfort with ambiguity. They want candidates who can generate insights, not just apply frameworks. If your AI prep has made you overly rigid and framework-first, BCG cases will feel awkward. Practice generating 3–4 hypotheses before reaching for a framework.

BCG also uses written cases at some offices for first rounds. Make sure you know which format you'll face — the Casey chatbot won't tell you this.

McKinsey

McKinsey's problem-solving interview is structured differently from a traditional case. It's more like a series of analytical questions on a provided dataset and business situation. McKinsey's own prep guide covers this well — read it before you use any third-party prep tool.

For McKinsey's fit interview ("personal experience interview" or PEI), they go much deeper on a single story than most firms. Where BCG might ask about 3 different stories, McKinsey will spend 15+ minutes on one, drilling down to specifics. AI tools don't simulate this adequately. Practice with a human who knows how to drill.

Bain

Bain uses a written case component and also asks "Bain-style" open-ended business questions that reward synthesis and clarity over comprehensive coverage. Bain interviewers tend to be conversational and will give you signals — learn to read them. AI tools don't give you practice reading those human cues.

A Note on AI for Math in Cases

This needs a direct mention because it comes up: several candidates on communities like r/consulting have reported that AI tools gave them wrong math or rounded incorrectly during case practice. One comment that circulated: "AI tools resulted in poor performance, especially for structuring and math — wouldn't recommend using it as if it was a case buddy."

That experience tracks. LLM-based case tools are not reliable calculators. They can set up the math correctly but execute it wrong. Always verify case math independently. If you're using AI for case drill, manually check every numerical answer the AI gives you. Don't develop the habit of trusting AI math under pressure — you'll pay for it in the actual interview.
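One way to build the checking habit is to recompute every figure yourself and compare it against what the tool reported. The sketch below is a minimal Python example under stated assumptions: the case numbers (units, price, costs) and the "AI-reported" figures are made up for illustration, and the 1% tolerance is an arbitrary choice to allow for rounding.

```python
import math


def matches(recomputed, claimed, rel_tol=0.01):
    """True if the claimed figure is within rel_tol of your own recomputation."""
    return math.isclose(recomputed, claimed, rel_tol=rel_tol)


# Hypothetical profitability case (all inputs are illustrative assumptions).
units = 1_200_000
price = 45
variable_cost_per_unit = 28
fixed_costs = 15_000_000

revenue = units * price
profit = revenue - units * variable_cost_per_unit - fixed_costs
margin = profit / revenue

# Hypothetical figures an AI tool might report back during practice.
ai_reported_profit = 6_400_000
ai_reported_margin = 0.10

print("profit ok:", matches(profit, ai_reported_profit))
print("margin ok:", matches(margin, ai_reported_margin))
```

In this made-up case the reported margin checks out but the reported profit does not, which is the kind of partial error that is easy to miss if you only skim the final answer. In the interview itself you will be doing this arithmetic by hand, so use a script only to audit practice sessions after the fact.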

FAQ

Can I use AI during a consulting case interview?

No. During live rounds — whether in-person or on video — you don't have access to AI tools. The question is only relevant to prep. For prep: absolutely yes, and the major firms are actively facilitating it (BCG Casey, McKinsey's prep resources).

What's the best AI tool for case interview preparation?

It depends on what you need. For pure case volume and framework drill: mbb.ai and CaseWithAI are strong. For a large case library: PrepLounge's AI Casebot has 500+ cases. For voice-based practice (closest to the real thing): CasePrepared. For behavioral fit interview prep alongside cases: AceRound AI covers the fit component well.

How long should I prepare for a case interview with AI?

Most successful candidates spend 6–10 weeks total. On a 6-week timeline, that means the first 3–4 weeks AI-heavy and the last 2–3 weeks human-heavy; on a longer timeline, stretch both phases proportionally. Don't do 100% AI or 100% human, because neither will prepare you fully. The exact time depends on your starting point; someone with zero case experience needs more time in Zone 1 (framework drilling) than someone with a finance or consulting background.

Does AI case interview practice count as real preparation?

Yes, for building volume and drilling frameworks. No, for developing the communication and judgment skills that win offers. Both are necessary. AI prep that has you consistently getting "correct" structured answers doesn't mean you're ready — firms evaluate how you think out loud, not just what you conclude.

Can ChatGPT help me prepare for a McKinsey or BCG case interview?

ChatGPT can generate case scenarios and give structural feedback, but it's significantly less calibrated to MBB-specific standards than dedicated tools like mbb.ai or PrepLounge Casebot. Use ChatGPT for quick brainstorming (generating hypotheses, practicing "so what" synthesis) rather than for structured mock interviews.

What do consulting firms think about AI-generated answers?

In live interviews: they expect original thinking, and AI-generated answers often sound generic in a way that experienced interviewers notice. In prep: they're fine with it and increasingly expect you to have used AI tools. The distinction that matters is whether your thinking became sharper through AI prep, or whether you just learned to deliver AI-structured responses without actually developing the underlying skill.

Author · Alex Chen. Career consultant and former tech recruiter. Spent 5 years on the hiring side before switching to help candidates instead. Writes about real interview dynamics, not textbook advice.

Related Articles

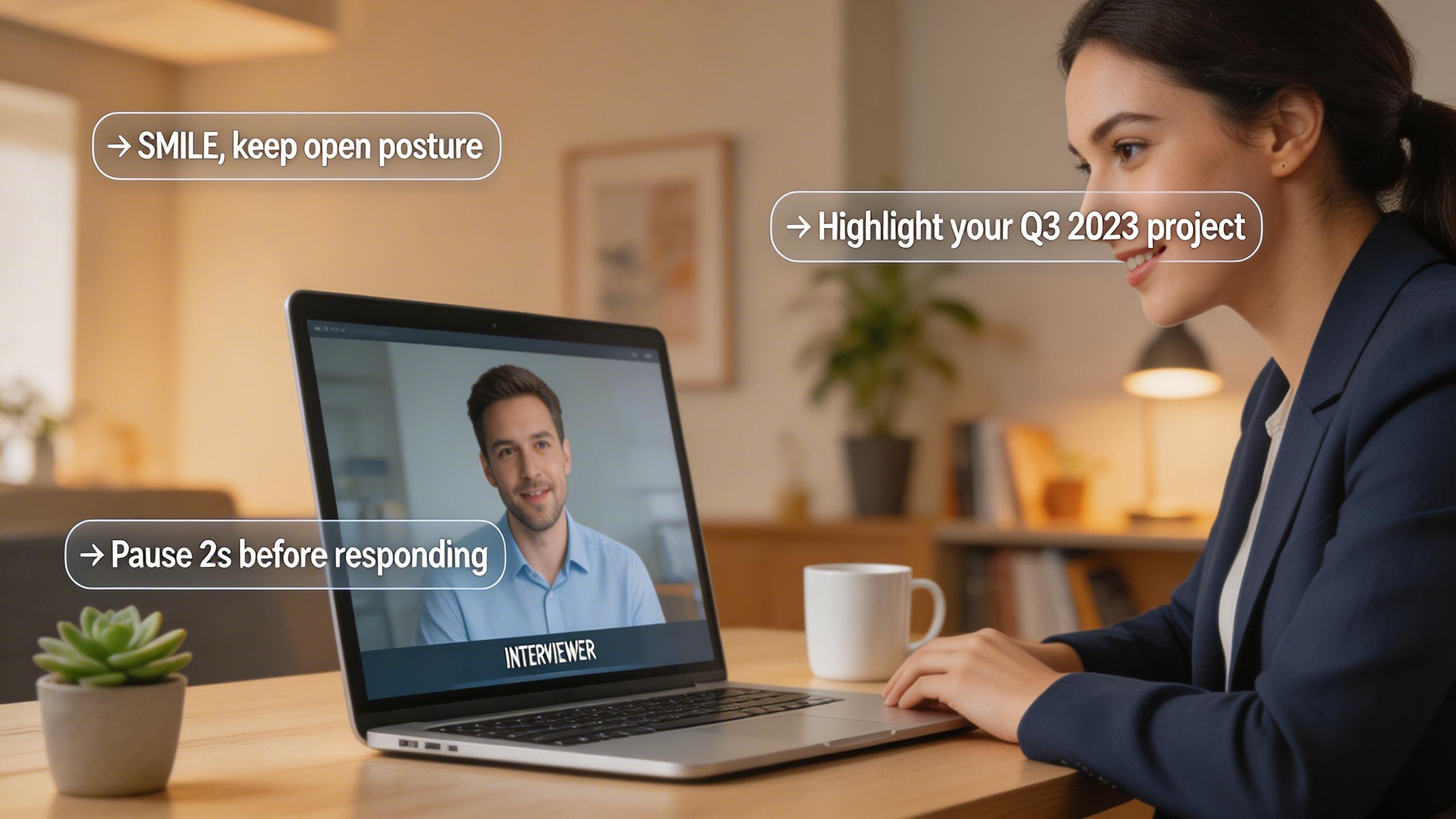

Real-Time AI Interview Helper: What It Does and How to Use It

A practical guide to real-time AI interview helpers — how they work during live interviews, legitimate use cases, and what to avoid.

Can HireVue Detect Cheating? What Actually Gets Flagged in 2026

HireVue cheating detection explained: tab switching, shared scripts, AI-generated answers, and looking away — what the system actually flags and what it misses.

The Honest Switcher's Guide to Finding a Final Round AI Alternative

Comparing the best Final Round AI alternatives for 2026: honest breakdown of pricing, real-time performance, detection risks, and when each tool actually makes sense.