Is Using AI in Interviews Cheating? The Answer Is More Complicated Than You Think

TL;DR: Using AI to prepare for an interview is not cheating — it's smart practice. Using AI to generate answers live during the interview, while presenting them as your own spontaneous thoughts, is deception. The line isn't about the tool; it's about whether you're representing genuine capability or fabricating it. This guide explains exactly where that line sits, what employers can actually detect, and how to use AI in a way that makes you genuinely better — not just temporarily better-sounding.

In early 2025, a startup founder noticed something odd during a Zoom screening: a candidate's answers were suspiciously polished, the pacing slightly unnatural. Then he spotted text reflecting off the candidate's glasses. The candidate was reading AI-generated responses in real time.

That story spread. Then came the Amazon intern whose offer was rescinded after he publicly bragged about using an AI overlay tool throughout his entire interview process. Then CodeSignal data showed technical assessment cheating had more than doubled in one year, from 16% to 35% of attempts.

Now 1 in 5 U.S. professionals admits to secretly using AI during live interviews (Blind, 2025). More than half say it's become "the norm."

So: is using AI in interviews cheating? And what should you actually do?

The Real Question Isn't "Is AI Allowed?" — It's "Are You Representing Yourself?"

Every ethical question about AI in interviews comes down to one thing: are you representing your own capability, or fabricating capability you don't have?

That distinction sounds simple. In practice, it gets complicated fast.

Using AI to research a company before your interview — clearly fine. Using AI to generate a STAR answer during a mock practice session, then internalizing and personalizing it — fine. Using AI to run 50 practice questions until you genuinely know how to structure behavioral answers — fine.

Using AI to generate answers live during the interview, reading them aloud while the interviewer believes they're hearing your unassisted thoughts — that's the problem. Not because "AI bad," but because you're misrepresenting who you are.

The Fabric HQ 2026 State of AI Interview Cheating report (19,368 interviews analyzed) found that 38.5% of all candidates showed cheating behavior signals. Among technical roles, that hit 48%. And critically: 61% of those flagged cheaters would still advance through standard hiring processes without AI detection tools. They'd get hired. And then what?

The Amazon intern story is instructive: he couldn't use Git on day one. The interview was a fabrication of competence he didn't have. That's not a career win — it's a trap.

What "AI Interview Cheating" Actually Looks Like in 2026

Before you can decide where you stand, it helps to understand what the spectrum actually looks like:

Overlay tools (the clearest case of cheating): Apps like Cluely, LockedIn AI, and similar tools run invisibly on your screen, capture the interviewer's audio, transcribe it with speech-to-text, and surface generated answers for you to read. The interviewer sees none of this. You're essentially having someone else take your interview. Cluely's founders marketed it with a viral video of an Amazon internship obtained this way, framing it as "this isn't even cheating, LeetCode tests are useless." Whether or not the critique of LeetCode is valid (it partly is), "the test is bad" doesn't make fabricating your way through it ethical.

Deepfake impersonation (the most extreme case): Gartner predicts that by 2028, 1 in 4 candidate profiles worldwide will be entirely fake — synthetic voice, deepfake video, generated credentials. This isn't hypothetical: identity fraud in remote interviews is already documented enough that Cisco and Greenhouse partnered with identity verification company Clear.

Real-time ChatGPT in a browser tab (the supposed grey zone): Typing the interviewer's question into ChatGPT and reading the answer aloud is functionally the same as using an overlay tool, but candidates rationalize it as "I'm still the one deciding what to say." It is still fabrication.

AI-assisted note retrieval during take-home assessments: Many companies permit notes and internet access during take-home technical screens, and some explicitly allow AI tools as well. Check the rules for each assessment before assuming either way.

AI for preparation: Generating practice questions, getting feedback on draft answers, running mock interviews with an AI — this is not cheating by any reasonable standard. It's the equivalent of hiring a coach.

Can Employers Actually Detect AI Interview Cheating?

Partly. Here's the honest picture:

What detection tools can do: Behavioral platforms like Sherlock AI and InterviewGuard use eye-tracking patterns, mouse movement anomalies, and clipboard activity to flag suspicious behavior. HackerRank and CodeSignal detect pasted solutions through keystroke dynamics and copy-paste events. Some video interview platforms analyze response latency: a consistent pause before each answer, roughly as long as it takes an LLM to generate a response, is a red flag.

What they can't reliably do: Detect polished language itself. The Wiley/IJSA peer-reviewed study "ChatGPT, can you take my job interview?" (Canagasuriam et al., 2025) found something fascinating: interviewers sensed something was off with AI-generated responses (honesty ratings dropped), but delivery scores didn't differ. Standard rubrics missed the cheat. That means an interviewer who suspects you can't prove it from the rubric alone; they'll just advance someone else.

The behavioral follow-up is the real detector: Google, Cisco, and McKinsey have shifted back to in-person final rounds, in part because of AI-assisted cheating. More importantly, interviewers have adapted their questions: "Walk me through a model you personally deployed to production, including the 2am incident you had to debug." Or: "That answer mentioned distributed consensus. Can you whiteboard the failure modes you've personally seen?" Real experience is hard to fabricate when the follow-up questions get specific enough.

This is why AI cheating often backfires even when it "works" at the screen level. You get through the screen. You fail the technical debrief. Or worse: you get hired and can't do the job.

The Employer Hypocrisy Problem (And Why It Doesn't Resolve the Ethics)

There's a legitimate grievance here, and it deserves direct acknowledgment: companies that mandate AI for their engineers, integrate AI into their own hiring pipelines, and generate revenue from AI products are, in some cases, disqualifying candidates for using AI for 45 minutes in a coding screen.

Meta now lets candidates use an AI coding assistant in its interviews. Amazon disqualifies AI users. Google bans AI in hiring while integrating it across its products. Cursor, an AI-native code editor company, explicitly bans AI in its own hiring process.

This is inconsistent. The "you'll use AI every day on the job but can't use it to prove you can code" tension is real, and the frustration it generates on Reddit threads is valid.

But here's the thing: that frustration, however legitimate, doesn't change the ethical calculus. The question isn't whether the interview process is perfect. The question is whether you want to start a professional relationship based on misrepresentation. The system being flawed doesn't mean fabricating your way through it serves your long-term interests.

The better response to a broken system is to get genuinely good enough that you don't need to cheat in it.

How to Use AI for Interview Prep Without Crossing the Line

Here's what ethical, effective AI-assisted preparation actually looks like:

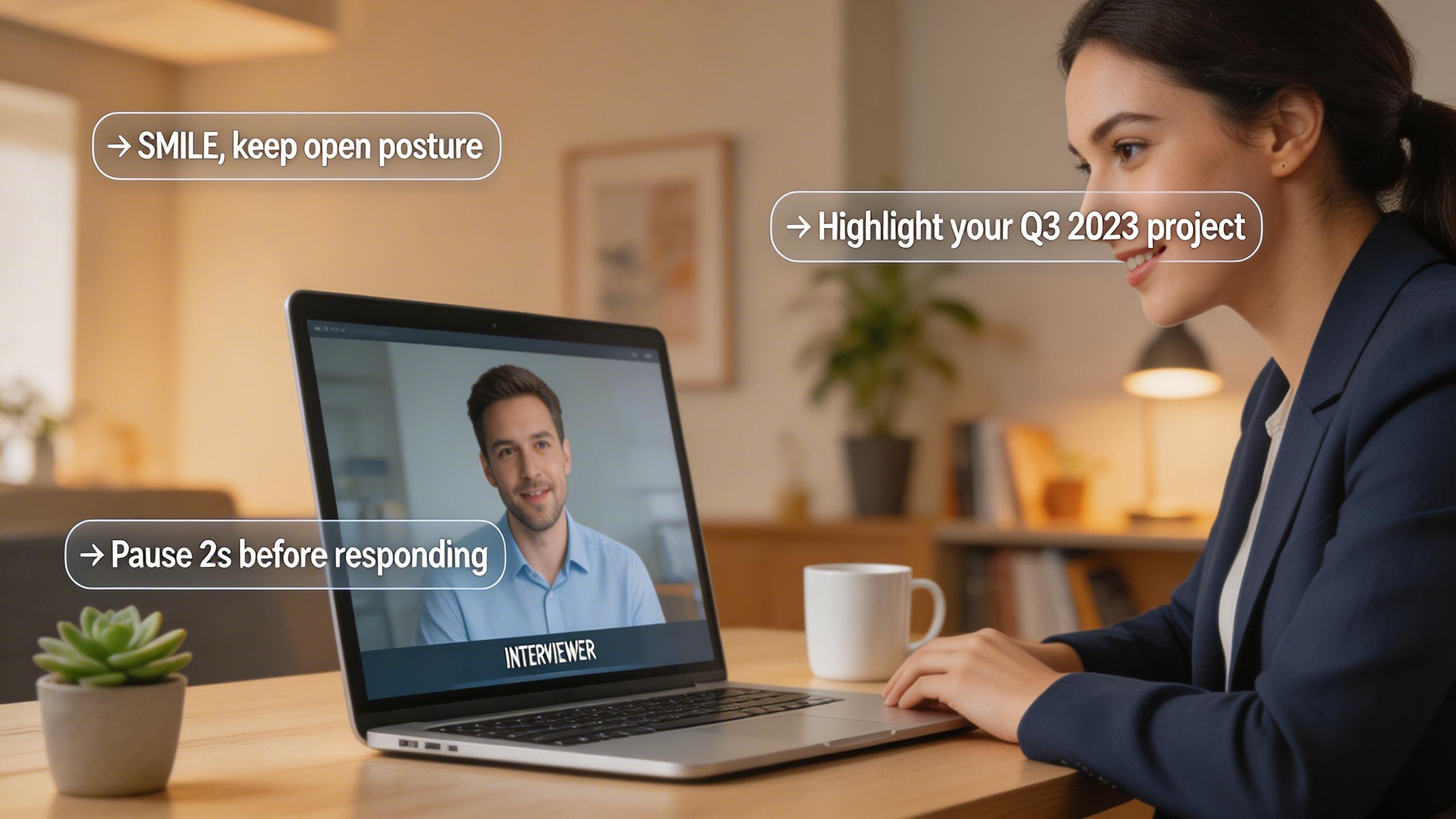

Mock interview practice: Run full interview simulations with an AI that knows your target role. The goal isn't to memorize answers — it's to understand the patterns well enough to construct good answers naturally. AceRound AI offers real-time AI interview practice designed specifically for this: you get feedback on structure, relevance, and delivery without having anything fed to you during a live interview.

STAR answer construction: Use AI to help build initial STAR answer drafts for behavioral questions based on your actual experiences. Then edit heavily — make it yours, cut the AI voice, add the specific detail that only you know. When you practice telling that story 10 times, it becomes genuinely yours.

Research and question anticipation: Have AI analyze the job description and generate likely interview questions. Research the company deeply using AI-accelerated reading. Show up knowing more about their tech stack, recent releases, and strategic priorities than any other candidate.

Weakness identification: Ask AI to stress-test your answers with prompts like "What would a skeptical interviewer push back on here?" This kind of adversarial prep is how you find the holes in your responses before the interviewer does.

Post-interview debriefs: Use AI after each interview to analyze what questions came up, what you answered well, what you fumbled. Build a running improvement log.

The goal: by the time you're in the interview, you don't need AI. You've already done the work.

Why "Just Prepare Better" Actually Wins

Here's the counterintuitive finding from the Wiley study: delivery quality doesn't significantly differ between candidates who cheat with AI and genuinely prepared candidates. But honesty and authenticity ratings do.

Interviewers — even when they can't articulate why — sense something is off with answers that are technically correct but lack the texture of genuine experience. The pacing is slightly off. The example is too perfect. There's no hesitation when hesitation would be natural.

Genuine preparation produces a different kind of polish: you answer smoothly because you actually know the material, you have the war stories because you lived them, you can answer the follow-up because the follow-up is asking about something real.

The 38.5% of candidates flagged for AI cheating behavior are competing against each other for spots. The candidates who prepare genuinely, using AI as a coach rather than a ghostwriter, have an increasingly clear field.

FAQ

Is using AI in a job interview cheating? Using AI live during the interview to generate answers you present as your own is deceptive, regardless of what tool you use. Using AI beforehand to prepare is not cheating — it's smart practice, equivalent to hiring a coach or using prep books.

Can companies detect if you use AI in an interview? Partially. Behavioral analytics tools, eye-tracking, keystroke dynamics, and copy-paste detection catch many cases. But the most reliable detector is human: a follow-up question that requires genuine experience to answer. Many candidates who pass AI-assisted screens get caught in technical debriefs or on the job.

Is it ethical to use ChatGPT to prepare for a job interview? Yes. Using ChatGPT to practice questions, refine answers, research the company, and simulate mock interviews is ethical. The ethical problem arises only when you use AI during the live interview and present its output as your own thinking.

What happens if you get caught using AI in an interview? Consequences range from immediate disqualification to rescinded offers (as with the Amazon intern case) to permanent blacklisting with a recruiter or firm. Some candidates who got through via AI cheating are discovered on the job when they can't perform, leading to early termination.

Should I use AI to help me during a coding interview? Check the explicit rules of the specific company and platform first — some now allow it. If not explicitly allowed: no. Prepare deeply with AI before the interview instead. AI-assisted mock coding practice will build the skills the screen is testing for; AI-generated answers during the screen will get you to a job you can't do.

Do companies allow AI tools during technical interviews? It varies. Meta has moved toward allowing AI coding assistants. Amazon, Google, and most other FAANG companies still prohibit them. Cursor explicitly bans them. Always check the specific company's current policy before assuming.

Author · Alex Chen. Career consultant and former tech recruiter. Spent 5 years on the hiring side before switching to help candidates instead. Writes about real interview dynamics, not textbook advice.
